Naive Bayes Classifier From Scratch in Python

The Naive Bayes algorithm is simple and effective and should be one of the first methods you try on a classification problem. In this tutorial you are going to learn about the Naive Bayes algorithm, including how it works and how to implement it from scratch in Python.

Update: Check out the follow-up on tips for using the Naive Bayes algorithm, titled “Better Naive Bayes: 1. Tips To Get The Most From The Naive Bayes Algorithm”.

Naive Bayes Classifier. Photo by Matt Buck, some rights reserved.

About Naive Bayes

The Naive Bayes algorithm is an intuitive method that uses the probabilities of each attribute belonging to each class to make a prediction. It is the supervised learning approach you would come up with if you wanted to approach a predictive modeling problem probabilistically. Naive Bayes simplifies the calculation of probabilities by assuming that the probability of each attribute belonging to a given class value is independent of all other attributes.

This is a strong assumption but results in a fast and effective method. The probability of an attribute value given a class value is called the conditional probability. By multiplying the conditional probabilities together for each attribute for a given class value, we have a probability of a data instance belonging to that class. To make a prediction we can calculate probabilities of the instance belonging to each class and select the class value with the highest probability.
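To make the multiply-and-compare step concrete, here is a minimal sketch. The class labels, the probability values and the predictClass() name are made up for illustration and are not part of the tutorial's code.

```python
from math import prod

def predictClass(conditionals):
    """Return (bestClass, scores) for per-class lists of conditional probabilities."""
    # Multiply the per-attribute conditional probabilities for each class.
    scores = {label: prod(probs) for label, probs in conditionals.items()}
    # The prediction is the class with the largest product.
    best = max(scores, key=scores.get)
    return best, scores

# Hypothetical P(attribute value | class) for three attributes.
conditionals = {
    "A": [0.8, 0.5, 0.9],
    "B": [0.3, 0.6, 0.4],
}
best, scores = predictClass(conditionals)
print(best, scores)
```

With these made-up numbers, class A has the larger product and is selected.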

Naive Bayes is often described using categorical data because it is easy to describe and calculate using ratios. A more useful version of the algorithm for our purposes supports numeric attributes and assumes the values of each numerical attribute are normally distributed (fall somewhere on a bell curve). Again, this is a strong assumption, but still gives robust results.

Predict the Onset of Diabetes

The test problem we will use in this tutorial is the Pima Indians Diabetes problem. This problem is comprised of 7.

Pima Indians patients. The records describe instantaneous measurements taken from the patient such as their age, the number of times pregnant and blood workup. All patients are women aged 2. All attributes are numeric, and their units vary from attribute to attribute. Each record has a class value that indicates whether the patient suffered an onset of diabetes within 5 years of when the measurements were taken (1) or not (0). This is a standard dataset that has been widely studied in the machine learning literature. A good prediction accuracy is 7.


Below is a sample from the pima-indians. NOTE: Download this file and save it with a . See this file for a description of all the attributes.

Handle Data

The first thing we need to do is load our data file. The data is in CSV format without a header line or any quotes.

We can open the file with the open function and read the data lines using the reader function in the csv module. We also need to convert the attributes that were loaded as strings into numbers that we can work with. The loadCsv() function loads the Pima Indians dataset in this way.
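A minimal sketch of what a loadCsv() function along these lines could look like, assuming every field in the file is numeric. It is an illustration of the description above rather than a definitive listing.

```python
import csv

def loadCsv(filename):
    """Load a header-less CSV file and convert every field to a float."""
    dataset = []
    with open(filename, newline="") as handle:
        for row in csv.reader(handle):
            if not row:  # skip blank lines
                continue
            # Fields arrive as strings; convert them to numbers.
            dataset.append([float(value) for value in row])
    return dataset
```

Calling loadCsv() with the path to the downloaded file should return a list of rows, each row being a list of floats.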


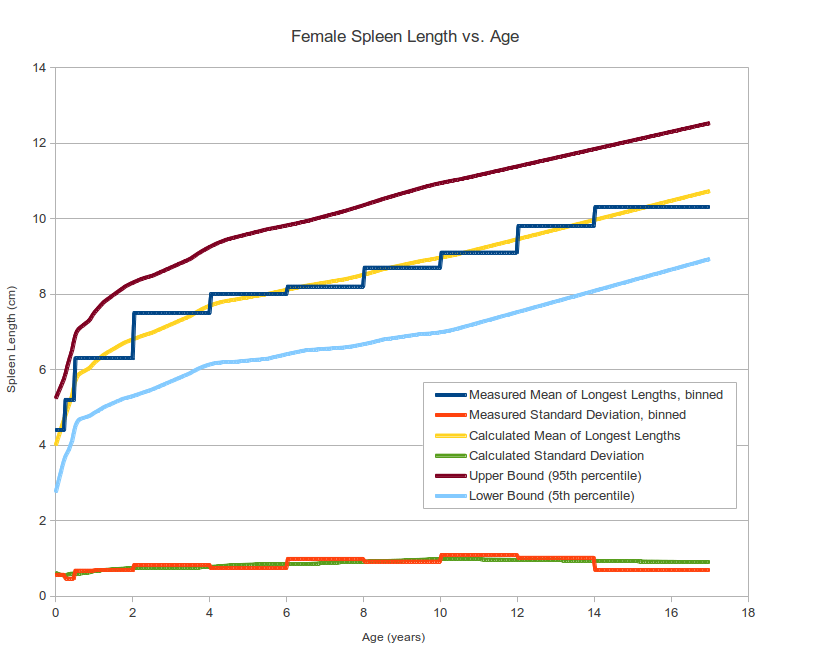

We need to split the data set randomly into train and test datasets with a ratio of 6. The splitDataset() function will split a given dataset by a given split ratio.

Summarize Data

The Naive Bayes model is comprised of a summary of the data in the training dataset. This summary is then used when making predictions. The summary of the training data collected involves the mean and the standard deviation for each attribute, by class value.
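Returning to the split step: a minimal sketch of a splitDataset() function, assuming the dataset is a list of rows. The seed parameter is an addition for repeatable splits, and the 0.75 ratio and the toy rows are arbitrary example values.

```python
import random

def splitDataset(dataset, splitRatio, seed=None):
    """Randomly split dataset into (train, test); train gets splitRatio of the rows."""
    rng = random.Random(seed)   # seed is an assumed convenience, not required
    rows = list(dataset)        # copy so the caller's list is left untouched
    rng.shuffle(rows)
    trainSize = int(len(rows) * splitRatio)
    return rows[:trainSize], rows[trainSize:]

dataset = [[1], [2], [3], [4], [5], [6], [7], [8]]
train, test = splitDataset(dataset, 0.75, seed=1)
print(len(train), len(test))
```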

For example, if there are two class values and 7 numerical attributes, then we need a mean and standard deviation for each attribute (7) and class value (2) combination, that is 14 combinations. These are required when making predictions to calculate the probability of specific attribute values belonging to each class value. We can break the preparation of this summary data down into the following sub-tasks: Separate Data By Class; Calculate Mean; Calculate Standard Deviation; Summarize Dataset; Summarize Attributes By Class.

Separate Data By Class

The first task is to separate the training dataset instances by class value so that we can calculate statistics for each class. We can do that by creating a map of each class value to a list of instances that belong to that class and sorting the entire dataset of instances into the appropriate lists.

The separateByClass() function does just this. The function returns a map of class values to lists of data instances.
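A minimal sketch of such a function, assuming (for illustration) that the class value is the last element of each row. The three sample rows are made up.

```python
def separateByClass(dataset):
    """Map each class value (assumed to be the last column) to its rows."""
    separated = {}
    for row in dataset:
        # Create the list for this class on first sight, then append the row.
        separated.setdefault(row[-1], []).append(row)
    return separated

dataset = [[1, 20, 1], [2, 21, 0], [3, 22, 1]]
separated = separateByClass(dataset)
print(separated)
# {1: [[1, 20, 1], [3, 22, 1]], 0: [[2, 21, 0]]}
```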

We can test this function with some sample data.

The mean is the central tendency (the middle) of the data, and we will use it as the middle of our Gaussian distribution when calculating probabilities. We also need to calculate the standard deviation of each attribute for a class value. The standard deviation describes the variation or spread of the data, and we will use it to characterize the expected spread of each attribute in our Gaussian distribution when calculating probabilities. The standard deviation is calculated as the square root of the variance. The variance is calculated as the average of the squared differences for each attribute value from the mean. Note we are using the N-1 method, which subtracts 1 from the number of attribute values when calculating the variance.
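The two statistics can be sketched as below. The function names mean() and stdev() are assumptions chosen for this illustration; the variance uses the N-1 denominator described above, so at least two values are needed.

```python
import math

def mean(numbers):
    """Arithmetic mean of a list of numbers."""
    return sum(numbers) / float(len(numbers))

def stdev(numbers):
    """Sample standard deviation (N-1 in the denominator)."""
    avg = mean(numbers)
    variance = sum((x - avg) ** 2 for x in numbers) / float(len(numbers) - 1)
    return math.sqrt(variance)

numbers = [1, 2, 3, 4, 5]
print(mean(numbers), stdev(numbers))
```

For the sample list the mean is 3.0 and the variance is 10 / 4 = 2.5, so the standard deviation is roughly 1.58.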

For a given list of instances (for a class value) we can calculate the mean and the standard deviation for each attribute. The zip function groups the values for each attribute across our data instances into their own lists so that we can compute the mean and standard deviation values for the attribute. We then calculate these summaries for each attribute within each class.

Make Prediction

We are now ready to make predictions using the summaries prepared from our training data. Making predictions involves calculating the probability that a given data instance belongs to each class, then selecting the class with the largest probability as the prediction. We can divide this part into the following tasks: Calculate Gaussian Probability Density Function; Calculate Class Probabilities; Make a Prediction; Estimate Accuracy.

Calculate Gaussian Probability Density Function

We can use a Gaussian function to estimate the probability of a given attribute value, given the known mean and standard deviation for the attribute estimated from the training data. Given that the attribute summaries were prepared for each attribute and class value, the result is the conditional probability of a given attribute value given a class value.

See the references for the details of this equation for the Gaussian probability density function. In summary we are plugging our known details into the Gaussian (attribute value, mean and standard deviation) and reading off the likelihood that our attribute value belongs to the class. In the calculateProbability() function we calculate the exponent first, then calculate the main division. This lets us fit the equation nicely on two lines.

In the calculateClassProbabilities() function, the probability of a given data instance is calculated by multiplying together the attribute probabilities for each class.
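One way these two steps could be written is sketched below. The layout of summaries (a dictionary mapping each class value to one (mean, stdev) pair per attribute) and the sample numbers are assumptions for illustration.

```python
import math

def calculateProbability(x, mean, stdev):
    """Gaussian density: exp(-(x - mean)^2 / (2 * stdev^2)) / (sqrt(2 * pi) * stdev)."""
    exponent = math.exp(-((x - mean) ** 2) / (2 * stdev ** 2))
    return (1 / (math.sqrt(2 * math.pi) * stdev)) * exponent

def calculateClassProbabilities(summaries, inputVector):
    """Multiply the per-attribute densities together for each class."""
    probabilities = {}
    for classValue, classSummaries in summaries.items():
        probabilities[classValue] = 1.0
        # Pair each attribute's (mean, stdev) with the matching input value.
        for (mean, stdev), x in zip(classSummaries, inputVector):
            probabilities[classValue] *= calculateProbability(x, mean, stdev)
    return probabilities

# Assumed layout: class value -> one (mean, stdev) pair per attribute.
summaries = {0: [(1.0, 0.5)], 1: [(20.0, 5.0)]}
inputVector = [1.1]
probabilities = calculateClassProbabilities(summaries, inputVector)
print(probabilities)
```

The input value 1.1 lies close to the mean of class 0 and far from the mean of class 1, so class 0 receives by far the larger value. Note that a standard deviation of zero would cause a division by zero in this sketch.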

Make Predictions

Finally, we can estimate the accuracy of the model by making predictions for each data instance in our test dataset. The getPredictions() function will do this and return a list of predictions for each test instance.

Get Accuracy

The predictions can be compared to the class values in the test dataset and a classification accuracy can be calculated as an accuracy ratio between 0% and 100%.
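A sketch of how getPredictions() and getAccuracy() might look. The predict() and gaussian() helpers, the summaries layout (class value mapped to one (mean, stdev) pair per attribute) and the sample rows are assumptions added so the example runs on its own; each row is assumed to end with its class value.

```python
import math

def gaussian(x, mean, stdev):
    """Gaussian probability density of x (hypothetical helper)."""
    exponent = math.exp(-((x - mean) ** 2) / (2 * stdev ** 2))
    return exponent / (math.sqrt(2 * math.pi) * stdev)

def predict(summaries, inputVector):
    """Hypothetical helper: the class with the largest product of densities."""
    bestLabel, bestProb = None, -1.0
    for classValue, classSummaries in summaries.items():
        prob = 1.0
        # zip stops at the last attribute, so a trailing class value is ignored.
        for (mean, stdev), x in zip(classSummaries, inputVector):
            prob *= gaussian(x, mean, stdev)
        if prob > bestProb:
            bestLabel, bestProb = classValue, prob
    return bestLabel

def getPredictions(summaries, testSet):
    """One prediction per test instance."""
    return [predict(summaries, row) for row in testSet]

def getAccuracy(testSet, predictions):
    """Percentage of rows whose last element matches the prediction."""
    correct = sum(1 for row, p in zip(testSet, predictions) if row[-1] == p)
    return (correct / float(len(testSet))) * 100.0

summaries = {"A": [(1.0, 0.5)], "B": [(20.0, 5.0)]}
testSet = [[1.1, "A"], [19.1, "B"], [4.0, "A"]]
predictions = getPredictions(summaries, testSet)
accuracy = getAccuracy(testSet, predictions)
print(predictions, accuracy)
```

In this toy run the third row is labelled A but sits nearer to class B under the assumed summaries, so two of the three predictions are counted as correct.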

The getAccuracy() function will calculate this accuracy ratio.

Tie it Together

Finally, we need to tie it all together. The full code listing combines the functions above into Naive Bayes implemented from scratch in Python.

This can be calculated as the probability of a data instance belonging to one class, divided by the sum of the probabilities of the data instance belonging to each class. For example an instance had the probability of 0.

A and 0. 0. 01 for class B, the likelihood of the instance belonging to class A is (0.

Log Probabilities: The conditional probabilities for each class given an attribute value are small. When they are multiplied together they result in very small values, which can lead to floating point underflow (numbers too small to represent in Python). A common fix for this is to sum the logarithms of the probabilities instead of multiplying the probabilities themselves.
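A sketch of the log-probability idea, offered as one possible approach rather than a reference solution. The function names and the synthetic 400-attribute example are assumptions; the example is sized so that the plain product of densities underflows to zero while the summed logs remain usable.

```python
import math

def logGaussian(x, mean, stdev):
    """Natural log of the Gaussian density, computed without calling exp()."""
    return -((x - mean) ** 2) / (2 * stdev ** 2) - math.log(math.sqrt(2 * math.pi) * stdev)

def calculateClassLogProbabilities(summaries, inputVector):
    """Sum log densities per class instead of multiplying raw densities."""
    logProbabilities = {}
    for classValue, classSummaries in summaries.items():
        logProbabilities[classValue] = sum(
            logGaussian(x, mean, stdev)
            for (mean, stdev), x in zip(classSummaries, inputVector)
        )
    return logProbabilities

# Synthetic data: 400 attributes, each value 3 standard deviations from the
# class 0 mean and 2 standard deviations from the class 1 mean.
summaries = {0: [(0.0, 1.0)] * 400, 1: [(5.0, 1.0)] * 400}
inputVector = [3.0] * 400
logProbabilities = calculateClassLogProbabilities(summaries, inputVector)
best = max(logProbabilities, key=logProbabilities.get)

# For comparison, multiply the raw densities for class 1 directly.
naiveProduct = 1.0
for (mean, stdev), x in zip(summaries[1], inputVector):
    naiveProduct *= math.exp(logGaussian(x, mean, stdev))

print(best, logProbabilities, naiveProduct)
```

Because the logarithm is monotonic, the class with the largest summed log density is also the class with the largest product, so the prediction is unchanged while the underflow is avoided.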

Research and implement this improvement.

Nominal Attributes: Update the implementation to support nominal attributes. This is very similar, and the summary information you can collect for each attribute is the ratio of category values for each class. Dive into the references for more information.

Different Density Function (Bernoulli or multinomial): We have looked at Gaussian Naive Bayes, but you can also look at other distributions. Implement a different distribution such as multinomial, Bernoulli or kernel Naive Bayes that makes different assumptions about the distribution of attribute values and/or their relationship with the class value.

Resources and Further Reading

This section will provide some resources that you can use to learn more about the Naive Bayes algorithm in terms of both the theory of how and why it works and practical concerns for implementing it in code.

Problem

More resources for learning about the problem of predicting the onset of diabetes.

Code

This section links to open source implementations of Naive Bayes in popular machine learning libraries. Review these if you are considering implementing your own version of the method for operational use.

Books

You may have one or more books on applied machine learning.

This section highlights the sections or chapters in common applied books on machine learning that refer to Naive Bayes.